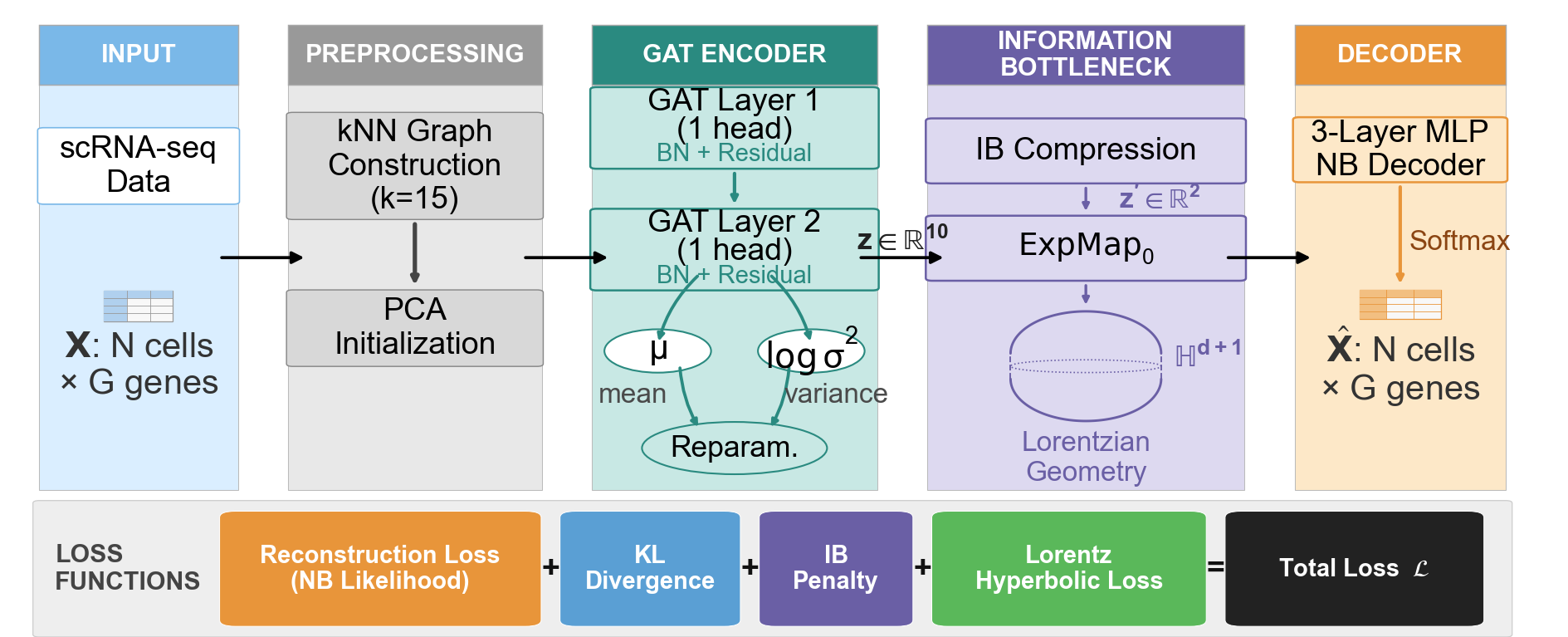

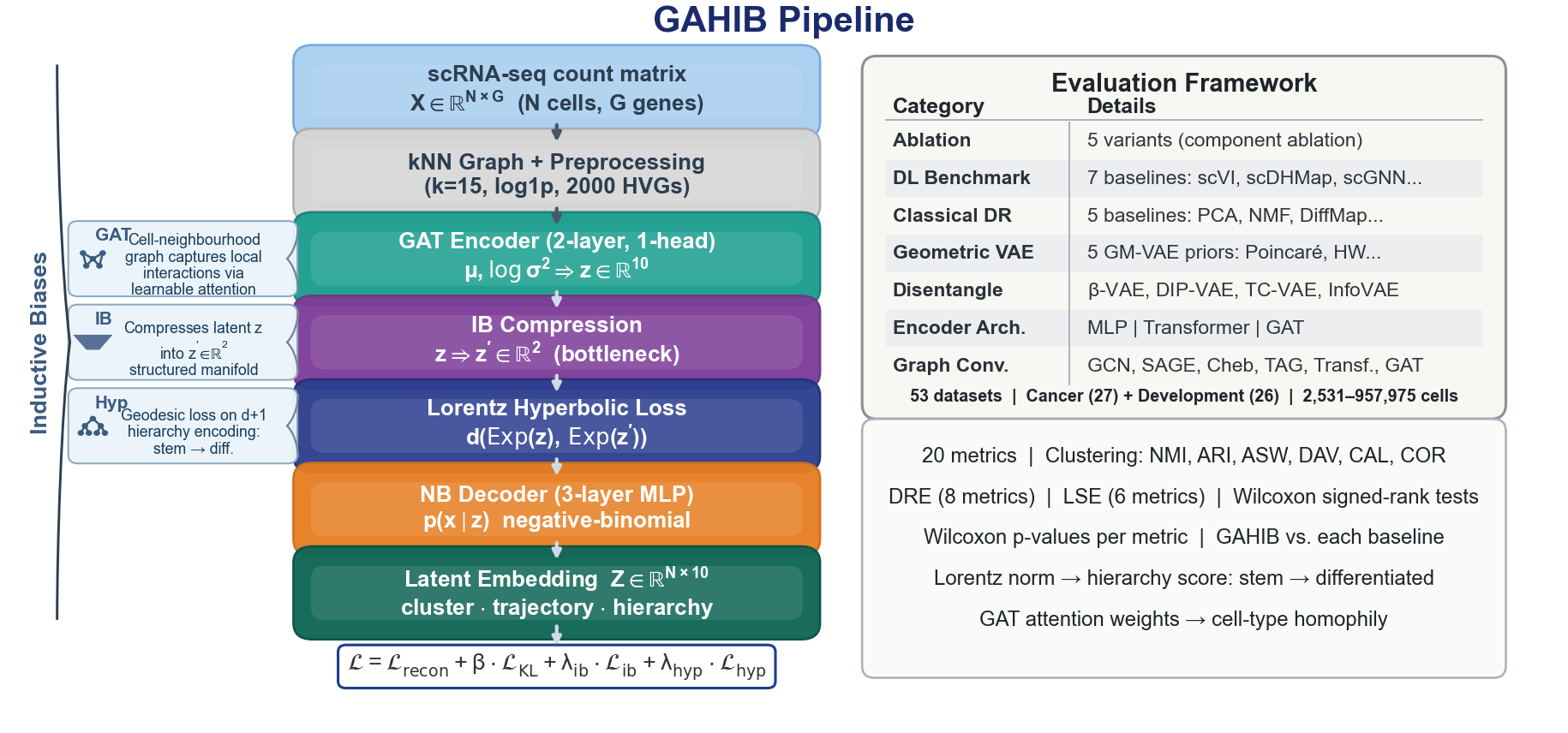

Architecture

Method

Encoder options: MLP, Transformer, GAT, GCN, GraphSAGE, ChebConv, TAG, GraphTransformer, ARMA.

Detailed benchmark figures remain gated; architecture and objective details are safe preview content.

Mechanism summary

Graph-attention VAE with bottleneck geometry

Encoder

Graph-aware counts

A graph or sequence encoder maps single-cell counts into a shared latent representation.

Bottleneck

Compressed manifold

A low-dimensional coordinate is trained directly instead of relying only on post-hoc projection.

Geometry

Lorentz hierarchy

The hyperbolic objective encourages radial hierarchy and angular lineage separation.

Objective

Three coupled losses

Reconstruction. A count likelihood (negative binomial, zero-inflated negative binomial, Poisson, or zero-inflated Poisson) is fit to the raw counts.
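As an illustration of the reconstruction term, the following is a minimal sketch of a negative-binomial log-likelihood in the common mean and inverse-dispersion parametrization. The function names, the parametrization, and the use of a single shared `theta` are assumptions made for the example; the page does not specify how the implementation parametrizes its likelihoods.

```python
import math


def nb_log_likelihood(x, mu, theta):
    """Log-probability of count x under a negative binomial with
    mean mu > 0 and inverse dispersion theta > 0
    (variance = mu + mu**2 / theta)."""
    return (
        math.lgamma(x + theta) - math.lgamma(theta) - math.lgamma(x + 1)
        + theta * math.log(theta / (theta + mu))
        + x * math.log(mu / (theta + mu))
    )


def reconstruction_loss(counts, means, theta):
    """Negative log-likelihood of one cell's counts, summed over genes.
    `means` stands in for the decoder output (hypothetical here)."""
    return -sum(nb_log_likelihood(x, mu, theta) for x, mu in zip(counts, means))
```

The zero-inflated variants mix this distribution with a point mass at zero, and the Poisson case is recovered in the limit of large `theta`.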

Information bottleneck. A second decoder reconstructs the input from a 2-D manifold coordinate, compressing the latent into a low-dimensional representation suitable for visualization and trajectory analysis.

Lorentz hyperbolic loss. This term anchors the manifold coordinate on the hyperboloid model of hyperbolic space. Radial distance from the origin encodes hierarchy: cells closer to the origin sit higher in the developmental tree, and lineage divergence maps to angular separation.
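To make the radial and angular reading concrete, here is a minimal sketch that assumes the 2-D bottleneck coordinate is lifted onto the hyperboloid with the exponential map at the origin. The page does not say how the implementation performs this step, so the function names and the choice of map are illustrative assumptions.

```python
import math


def lift_to_hyperboloid(u, v):
    """Map a 2-D coordinate (u, v) to a point (x0, x1, x2) on the
    unit-curvature hyperboloid via the exponential map at the origin."""
    r = math.hypot(u, v)
    if r == 0.0:
        return (1.0, 0.0, 0.0)  # the origin of the hyperboloid
    scale = math.sinh(r) / r
    return (math.cosh(r), scale * u, scale * v)


def radius_and_angle(point):
    """Hyperbolic distance from the origin and polar angle of a point."""
    x0, x1, x2 = point
    radius = math.acosh(max(x0, 1.0))  # clamp guards against rounding below 1
    angle = math.atan2(x2, x1)
    return radius, angle
```

Under this convention, a small radius would correspond to a position near the root of the hierarchy, and two lineages would be distinguished by their angles.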

Lorentz distance

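The Lorentz distance between two points $x$ and $y$ on the hyperboloid is, for curvature $-1$,

$$d_{\mathcal{L}}(x, y) = \operatorname{arccosh}\left(-\langle x, y \rangle_{\mathcal{L}}\right),$$

where the Minkowski inner product is

$$\langle x, y \rangle_{\mathcal{L}} = -x_0 y_0 + \sum_{i=1}^{n} x_i y_i.$$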
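A small numerical sketch of this distance follows. It assumes the standard unit-curvature convention, in which the Minkowski inner product negates the product of the first (time-like) components and the distance is the inverse hyperbolic cosine of the negated inner product. The function names are chosen for the example and are not taken from the project's source.

```python
import math


def minkowski_inner(x, y):
    """Minkowski inner product: -x0*y0 + sum_i xi*yi over the remaining axes."""
    return -x[0] * y[0] + sum(a * b for a, b in zip(x[1:], y[1:]))


def lorentz_distance(x, y):
    """Geodesic distance between two points on the unit hyperboloid."""
    # For valid points -<x, y> >= 1; clamp to absorb floating-point error.
    return math.acosh(max(-minkowski_inner(x, y), 1.0))


# Two points on the same geodesic through the origin, at radii 1.0 and 0.25.
p = (math.cosh(1.0), math.sinh(1.0), 0.0)
q = (math.cosh(0.25), math.sinh(0.25), 0.0)
separation = lorentz_distance(p, q)  # expected to be close to 0.75
```

The clamp matters in practice: for nearly identical points, rounding can push the argument slightly below 1, where `acosh` is undefined.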

Reference diagrams

Manuscript figures

Important constraint

The hyperbolic loss is degenerate when the bottleneck coordinate is untrained, that is, when the bottleneck reconstruction weight is zero. The implementation enforces this dependency between the two terms.
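One way such a dependency could be enforced is a configuration check that rejects a positive hyperbolic weight when the bottleneck term is switched off. The sketch below is hypothetical: the function name, the parameter names, and the decision to raise an error (rather than, for example, silently disabling the hyperbolic term) are assumptions, not the project's documented behavior.

```python
def validate_loss_weights(bottleneck_weight: float, hyperbolic_weight: float) -> None:
    """Reject configurations where the hyperbolic loss would act on an
    untrained bottleneck coordinate (hypothetical check)."""
    if hyperbolic_weight > 0 and bottleneck_weight <= 0:
        raise ValueError(
            "hyperbolic loss requires a trained bottleneck coordinate: "
            "set bottleneck_weight > 0 or hyperbolic_weight = 0"
        )
```

Running the check when the model is configured, before training starts, would surface the problem early instead of producing a degenerate embedding.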

Optional trajectory modules

Stochastic differential equation and partial differential equation modules are provided for trajectory experiments. These are off by default and documented in the source.

Detailed quantitative comparisons against baselines, ablations, and interpretability analyses appear in the manuscript and will be published on this site upon journal acceptance.
